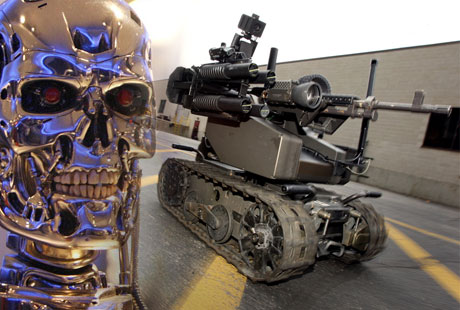

Robots Trained To Fire On People

Source: pddnet.com

It’s a Star Wars dream or nightmare, depending on whom you side with. Personally, I’m going to have to side against anything pro-human-annihilation. But it seems like the dark side always has the more advanced weaponry.

Among the many calls for action by the panel of industry experts on August 5 at NIWeek in Austin, TX, a few tidbits of info caught my attention and set off spastic, Terminator-esque doomsday scenarios in my mind.

I can’t be held responsible; the panel brought up the subject. Apparently, one question that Dr. David Barrett, director of the Senior Capstone Program in Engineering (SCOPE) at Olin College, often fields relates to human vs. droid futuristic scenarios that don’t stray far from the basic plot of the Terminator franchise.

The subject came up in response to an audience question that boiled down to the ethics of innovation. How do we know if or when we’ve gone too far? Would we know, or would it be too late?

Essentially, the panelists stated that technology is a tool, and like any tool, it comes with great responsibility. Hearing Yoda bubble to the top? Luckily, the panel brought it back down to earth by adding … but don’t be naïve.

As long as we have innovators striving toward the utopian greater good, we cannot deny that others are working just as hard, if not harder, to counteract any good we try to add to this planet in our 80-odd years on board.

The crowd was coming to terms with the cloud lurking over our sunshine-and-lollipops naivety when Ellen Purdy, enterprise director of joint ground robotics for the U.S. Department of Defense (DoD), aptly added that the autonomous weapon is coming.

While Purdy stated that the DoD didn’t have a hand in financing or developing the project, she was aware of “robots trained [programmed] to fire on people.” Suddenly, I don’t feel nearly as qualified to battle a droid army as I did when my brothers and I pushed a battalion back into my father’s field and successfully defended the Eagle’s Nest with canes, bats, and two sticks tied to the ends of a broken swing that served as a custom nunchuck.

The implications of the evil genius. I suppose that if we’re equipping kids with programming software along with their LEGO sets, it’s not much of a stretch to imagine a hobbyist who builds a robotic guard dog that snipes trespassers.

Specifics weren’t given, but the panel’s sometimes grim, undeniable candor was chilling. Then again, are we not just as foolish when we turn a blind eye? When new ground is broken in robotics, or in any other technology, it’s ludicrous to sit back and say, “You know what? I have a good feeling about this vision system – I’m sure nobody would try to program it to recognize and annihilate a human.”

When we’re gripped by the excitement of challenging ourselves to see whether we could, a divide opens up between that thrill and the question of whether we should.

When asked her opinion on the subject, Jeanne Dietsch, CEO and cofounder of MobileRobots, was concise. “Do we have anything to worry about?” The question echoed through the ballroom as we awaited her reply. She answered, “Yes.”
