Is Moore's Law about to die?

Source: ca.tech.yahoo.com

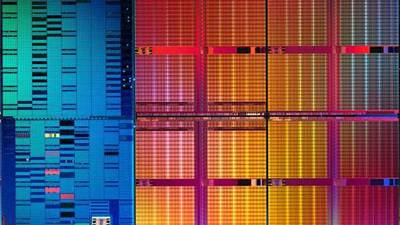

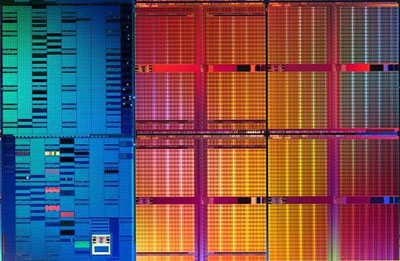

It's one of the most famous maxims in the technology world: Moore's Law, first articulated in 1965 by Gordon Moore, who went on to co-found Intel, posits that the number of transistors on an integrated circuit will double every 18 months to two years. That has held true -- like a rock -- since it was envisioned, from the 2,300 transistors on an Intel 4004 to the 2 billion or so transistors on a quad-core Itanium produced today.
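As a rough sanity check on that claim, the two transistor counts above can be turned into an implied doubling period. The sketch below is only illustrative: the 4004's 1971 release date is well documented, but treating "today" as 2009 is an assumption, and the function names are made up for this example.

```python
import math

def doublings(start_count, end_count):
    """Number of times start_count must double to reach end_count."""
    return math.log2(end_count / start_count)

def implied_doubling_period(start_count, end_count, years_elapsed):
    """Average years per doubling over the given span."""
    return years_elapsed / doublings(start_count, end_count)

# Transistor counts quoted in the article.
INTEL_4004 = 2_300
QUAD_CORE_ITANIUM = 2_000_000_000

# 1971 is the 4004's release year; 2009 is an ASSUMED value for "today".
YEARS = 2009 - 1971

n = doublings(INTEL_4004, QUAD_CORE_ITANIUM)
period = implied_doubling_period(INTEL_4004, QUAD_CORE_ITANIUM, YEARS)
print(f"{n:.1f} doublings, one every {period:.2f} years")
```

Under those assumptions the result lands just under two years per doubling, inside the range the Law describes.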

But even Moore has cautioned that the Law won't be sustainable forever. The limits of physics -- the size and characteristics of electrons that have to move through these circuits, for example -- mandate that at some point, we'll either have to stop shrinking transistors (which is how you fit more and more of them on a chip) or move to another form of CPU that doesn't rely on traditional silicon. Either way, Moore's Law would no longer apply. Intel itself has predicted the imminent end of Moore's Law on many occasions, though its most recent prediction is that there is "no end in sight."

Research group iSuppli begs to differ: it says the end is not only in sight but right around the corner. By 2014, the company says, Moore's Law will cease to drive chip design, and for a reason unrelated to physics. Rather, it's economics that will kill Moore's Law as we know it.

The big shift comes, says iSuppli, when companies shrink transistor nodes to below 20nm. The problem lies not with the chips themselves but with the equipment that will have to be built to make them. Since that equipment is really only useful during the lifetime of a single chip generation, its cost has to be depreciated over the life of that generation. At the 20nm point, equipment "costs will be so high, that the value of their lifetime productivity can never justify it," according to the company.

We still have a bit of time for that economic reality to alter itself. Today, chips built on a 45nm process are standard, with 32nm on the horizon (Intel could have these chips out in 2010), and 22nm the next step after that. 16nm (or possibly 18nm) would mark the following step in the progression, a point at which we'd be in the world of "nanoelectronics," where individual atoms may have to be manipulated to construct a CPU. And some companies, including Toshiba, have already announced early production plans at this level, though on a limited scale in comparison to Intel.
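The 45, 32, 22, 16 sequence is not arbitrary. A common industry rule of thumb (not something the article or iSuppli states) is that each generation aims to halve the area a transistor occupies, which shrinks each linear dimension by a factor of about 1/√2, or roughly 0.7. A small sketch of that arithmetic, with hypothetical names:

```python
import math

# Halving area shrinks each linear dimension by 1/sqrt(2), about 0.707.
# This is a rule-of-thumb ASSUMPTION, not a figure from the article.
SHRINK = 1 / math.sqrt(2)

def next_node(nm):
    """Idealized feature size of the generation after `nm` nanometers."""
    return nm * SHRINK

# Node names as listed in the article.
nodes = [45, 32, 22, 16]

for current, following in zip(nodes, nodes[1:]):
    ideal = next_node(current)
    print(f"{current}nm -> ideal {ideal:.1f}nm, named {following}nm")
```

Each named node falls within a nanometer of the idealized value, which is why 16nm is the expected successor to 22nm.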

Given the current state of the tech industry, economic reality is indeed a tough thing to get past, but it would be sad if money alone stopped chip advances dead in their tracks. Anyone want to take up a collection? Save Moore's Law!

Article from: YahooTech.com
